(i) Suppose that the (symmetric) matrix $[a_{ij}]$ is uniformly positive definite in the domain $\Omega$ and that the coefficients $a_{ij}$, $b_i = b_i(x)$ are uniformly bounded in $\Omega$. (ii) Let $u \in C^2(\Omega)$ satisfy the differential inequality $Lu \ge 0$ in $\Omega$, and let $x_0 \in \partial\Omega$ be such that $u$ is continuous at $x_0$ and $\partial_\nu u$ exists at $x_0$, where $\nu$ is the outer normal vector to $\Omega$ at $x_0$.

Let $u(x_0) = M$ and $\ell = \sup_{|x| = R/2} u(x)$. […] $Lw \ge 0$ in $B_R \setminus \overline{B_{R/2}}$, while also $w \le 0$ on $\partial B_R$ and $\partial B_{R/2}$, provided $m = M - \ell$. […] $w \le 0$ in $B_R \setminus \overline{B_{R/2}}$ by Theorem 2.8.1, so that $\partial_\nu w(x_0) \ge 0$.

Thus suppose for contradiction that $\Omega_0 \ne \Omega$. The subset $\Omega_0$ of $\Omega$ where $u = M$ is then non-empty and relatively closed in $\Omega$. By the connectedness of $\Omega$ it follows that the set $\partial\Omega_0 \cap \Omega$ must be non-empty (otherwise $\Omega_0$ would be open as well as closed, and thus identical to $\Omega$). Fix $x_1 \in \partial\Omega_0 \cap \Omega$, and in turn let $\tilde{x}_0$ be a point of $\Omega$, as near to $x_1$ as we like, such that $u(\tilde{x}_0) < M$. […] by the boundary point Theorem 2.8.3.

A discussion of the complexity of algorithms which calculate minimal and minimum fill-in under (R) can be found in Ohtsuki; Ohtsuki, Cheung, and Fujisawa; Rose, Tarjan, and Lueker; and Rose and Tarjan. Additional background on these and other matrix elimination problems can be found in the following survey articles and their references: Tarjan, George, and Reid. Haskins and Rose treat the nonsymmetric case under (R), and Kleitman settles some questions left open by Haskins and Rose. The unrestricted case was finally solved by Golumbic and Goss, who introduced perfect elimination bipartite graphs. These graphs will be discussed in the next section.

Theorem 12.1 characterized perfect elimination for symmetric matrices. Moreover, it says that it suffices to consider only the diagonal entries. Let $m$ denote the number of nonzero entries of matrix $M$. If $M$ is stored in $O(m)$ space, then the data structures needed for applying Algorithms 4.1 and 4.2 to $G(M)$ can be initialized in $O(m)$ time.
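As a rough illustration of how such a test can work, the sketch below builds $G(M)$ from the nonzero off-diagonal pattern of a symmetric matrix, orders the vertices by lexicographic breadth-first search, and checks whether that ordering is a perfect elimination ordering. It assumes (our reading, not stated above) that Theorem 12.1 is the classical result that a symmetric matrix with nonzero diagonal has a perfect elimination scheme exactly when $G(M)$ is triangulated (chordal), ignoring accidental numerical cancellation, and that Algorithms 4.1 and 4.2 are a LexBFS ordering step and an ordering test of this kind. All function names are hypothetical, and this simple version runs in roughly quadratic time rather than within the $O(m)$ bound claimed in the text.

```python
from typing import Dict, List, Set

Matrix = List[List[float]]
Graph = Dict[int, Set[int]]


def graph_of(m: Matrix) -> Graph:
    """Hypothetical helper: G(M) has one vertex per row/column and an
    edge {i, j} for each nonzero off-diagonal entry m[i][j]."""
    n = len(m)
    for i in range(n):
        if len(m[i]) != n:
            raise ValueError("matrix must be square")
        if m[i][i] == 0:
            raise ValueError("Corollary 12.2 assumes nonzero diagonal entries")
    adj: Graph = {i: set() for i in range(n)}
    for i in range(n):
        for j in range(n):
            if i != j and m[i][j] != 0:
                if m[j][i] == 0:
                    raise ValueError("nonzero pattern must be symmetric")
                adj[i].add(j)
    return adj


def lex_bfs(adj: Graph) -> List[int]:
    """Lexicographic BFS (simple quadratic version, not the linear-time one).

    Vertices are numbered n, n-1, ..., 1 as they are visited; the returned
    list is [sigma(1), ..., sigma(n)], i.e. the reverse of the visiting order.
    """
    n = len(adj)
    label: Dict[int, List[int]] = {v: [] for v in adj}
    unnumbered = set(adj)
    sigma = [0] * n
    for i in range(n, 0, -1):
        # Lexicographically largest label; ties broken by smallest vertex index.
        v = max(unnumbered, key=lambda x: (label[x], -x))
        sigma[i - 1] = v
        unnumbered.remove(v)
        for w in adj[v]:
            if w in unnumbered:
                label[w].append(i)
    return sigma


def is_perfect_elimination_order(adj: Graph, order: List[int]) -> bool:
    """Check that the later neighbours of every vertex form a clique.

    It suffices to check that, with u the earliest later neighbour of v,
    every other later neighbour of v is adjacent to u.
    """
    pos = {v: k for k, v in enumerate(order)}
    for v in order:
        later = [x for x in adj[v] if pos[x] > pos[v]]
        if later:
            u = min(later, key=lambda x: pos[x])
            if any(x != u and x not in adj[u] for x in later):
                return False
    return True


def has_perfect_elimination_scheme(m: Matrix) -> bool:
    """Hypothetical wrapper: does diagonal pivoting in LexBFS order avoid fill-in?"""
    adj = graph_of(m)
    return is_perfect_elimination_order(adj, lex_bfs(adj))
```

For example, a matrix whose pattern is a triangle with a pendant vertex passes the test, while the pattern of a 4-cycle fails it: eliminating any vertex of a chordless 4-cycle first joins its two non-adjacent neighbours, i.e. creates fill-in.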

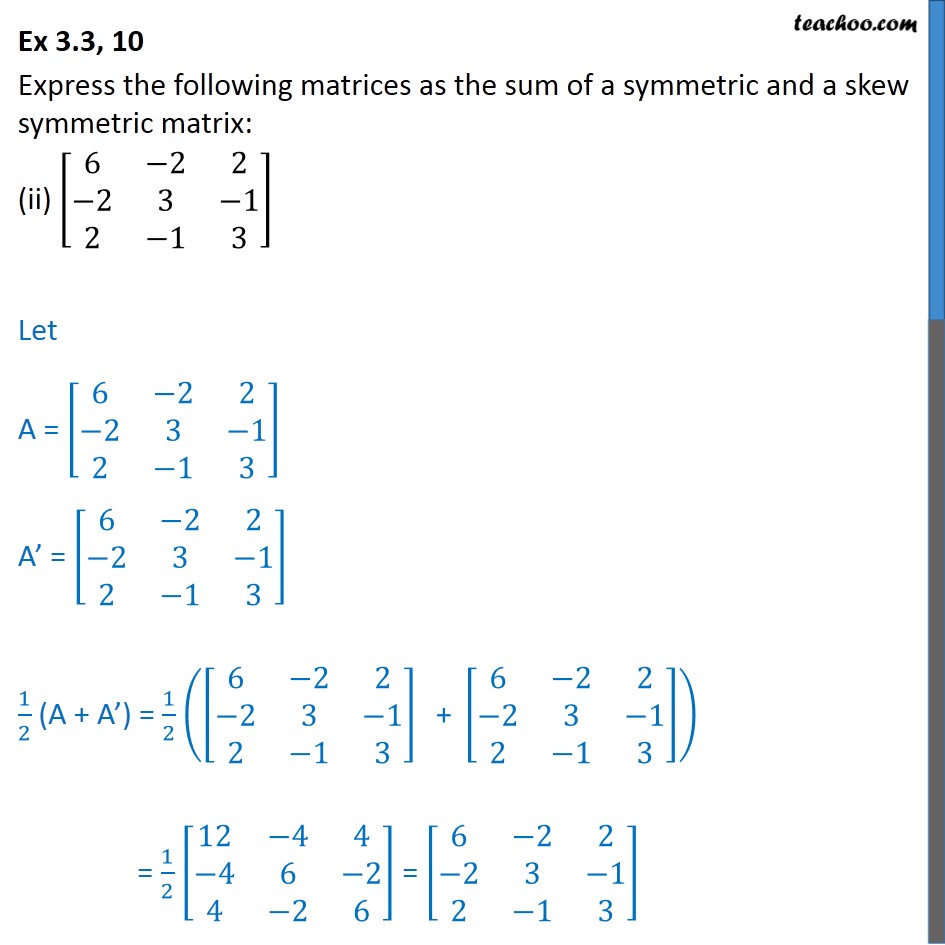

Corollary 12.2. A symmetric matrix with nonzero diagonal entries can be tested for possession of a perfect elimination scheme in time proportional to the number of nonzero entries.

Thus, we have that $x_{\alpha_1} y_{\alpha_2}$ and $x_s y_s$ are edges but $x_s y_{\alpha_2}$ is not an edge, which contradicts the bisimpliciality of $x_{\alpha_1} y_s$.

Figure 12.7.

A matrix $A$ is symmetric if and only if $a_{ji} = a_{ij}$ for every $i, j$. Every square diagonal matrix is symmetric, since all off-diagonal elements are zero. Similarly, in characteristic different from 2, each diagonal element of a skew-symmetric matrix must be zero, since each is its own negative. In linear algebra, a real symmetric matrix represents a self-adjoint operator expressed in an orthonormal basis over a real inner product space. The corresponding object for a complex inner product space is a Hermitian matrix with complex-valued entries, which is equal to its conjugate transpose. Therefore, in linear algebra over the complex numbers, it is often assumed that a symmetric matrix refers to one which has real-valued entries. Symmetric matrices appear naturally in a variety of applications, and typical numerical linear algebra software makes special accommodations for them.

A real symmetric matrix $A$ can be written as $A = T\Lambda T'$, where $T' = T^{-1}$ since $T$ is orthogonal. Moreover, all eigenvalues are necessarily real, and the eigenvectors can be chosen to be real. Eigenvectors associated with distinct eigenvalues are already orthogonal to begin with; if the eigenvalues are not all distinct, an orthogonal basis, albeit not a unique one, can still be constructed.

The rank of any matrix $A$, square or rectangular, can be found from its eigenstructure or from that of its product-moment matrices. If $A_{n \times n}$ is symmetric, we merely count the number of nonzero eigenvalues $k$ and note that $r(A) = k \le n$. If $A$ is nonsymmetric or rectangular, we can form its minor (or major) product moment and then compute the eigenstructure; in this case all eigenvalues are real and nonnegative. To find the rank of $A$, we simply count the number of positive eigenvalues $k$ and observe that $r(A) = k \le \min(m, n)$ if $A$ is an $m \times n$ rectangular matrix, or $r(A) = k \le n$ if $A$ is square. Finally, if $A$ is of product-moment form to begin with, or if $A$ is symmetric with nonnegative eigenvalues, then it can be written, although not uniquely, as $A = B'B$. The lack of uniqueness of $B$ was illustrated in the context of axis rotation in factor analysis.
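To make the eigenvalue-counting route to rank concrete, here is a minimal pure-Python sketch. It uses the cyclic Jacobi rotation method (our choice; the text names no particular eigenvalue algorithm) to diagonalize the symmetric product moment $A'A$, returning the eigenvalues together with an orthogonal $T$ such that $A'A \approx T\Lambda T'$, and then counts the eigenvalues that are clearly positive. The function names and the relative tolerance `rel_tol` are hypothetical; some cutoff is unavoidable, because in floating point the "zero" eigenvalues only come out approximately zero.

```python
import math
from typing import List, Tuple

Matrix = List[List[float]]


def transpose(a: Matrix) -> Matrix:
    return [list(row) for row in zip(*a)]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    bt = transpose(b)
    return [[sum(x * y for x, y in zip(row, col)) for col in bt] for row in a]


def jacobi_eigen(s: Matrix, tol: float = 1e-12,
                 max_sweeps: int = 100) -> Tuple[List[float], Matrix]:
    """Cyclic Jacobi method for a real symmetric matrix S.

    Returns (eigenvalues, T), where the columns of the orthogonal matrix T
    are eigenvectors, so that S is approximately T diag(eigenvalues) T'.
    """
    n = len(s)
    a = [[float(x) for x in row] for row in s]
    t = [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]
    for _ in range(max_sweeps):
        off = sum(a[i][j] ** 2 for i in range(n) for j in range(n) if i != j)
        if off < tol:
            break
        for p in range(n - 1):
            for q in range(p + 1, n):
                if a[p][q] == 0.0:
                    continue
                # Rotation angle chosen so that the (p, q) entry becomes zero.
                theta = (a[q][q] - a[p][p]) / (2.0 * a[p][q])
                tan = math.copysign(1.0, theta) / (abs(theta) + math.sqrt(theta * theta + 1.0))
                c = 1.0 / math.sqrt(tan * tan + 1.0)
                sn = tan * c
                for k in range(n):  # A <- A P  (columns p and q)
                    akp, akq = a[k][p], a[k][q]
                    a[k][p], a[k][q] = c * akp - sn * akq, sn * akp + c * akq
                for k in range(n):  # A <- P' A  (rows p and q)
                    apk, aqk = a[p][k], a[q][k]
                    a[p][k], a[q][k] = c * apk - sn * aqk, sn * apk + c * aqk
                for k in range(n):  # accumulate T <- T P
                    tkp, tkq = t[k][p], t[k][q]
                    t[k][p], t[k][q] = c * tkp - sn * tkq, sn * tkp + c * tkq
    return [a[i][i] for i in range(n)], t


def rank_via_product_moment(a: Matrix, rel_tol: float = 1e-10) -> int:
    """Rank of a (possibly rectangular) A = number of positive eigenvalues of A'A.

    An eigenvalue counts as positive if it exceeds rel_tol times the largest
    eigenvalue magnitude (an assumed cutoff, not taken from the text).
    """
    eig, _ = jacobi_eigen(matmul(transpose(a), a))
    scale = max((abs(x) for x in eig), default=0.0)
    return sum(1 for x in eig if x > rel_tol * scale) if scale > 0 else 0
```

For instance, `rank_via_product_moment([[1, 2], [2, 4], [3, 6]])` counts one positive eigenvalue of $A'A$, matching the fact that the second column is twice the first. Forming $A'A$ squares the condition number of $A$, so numerical libraries usually determine rank from a singular value decomposition instead; the product-moment route is shown here only because it mirrors the text.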
